A Bengaluru techie’s simple photo edit request went viral after Gemini AI delivered an unexpectedly literal result that amused users online.

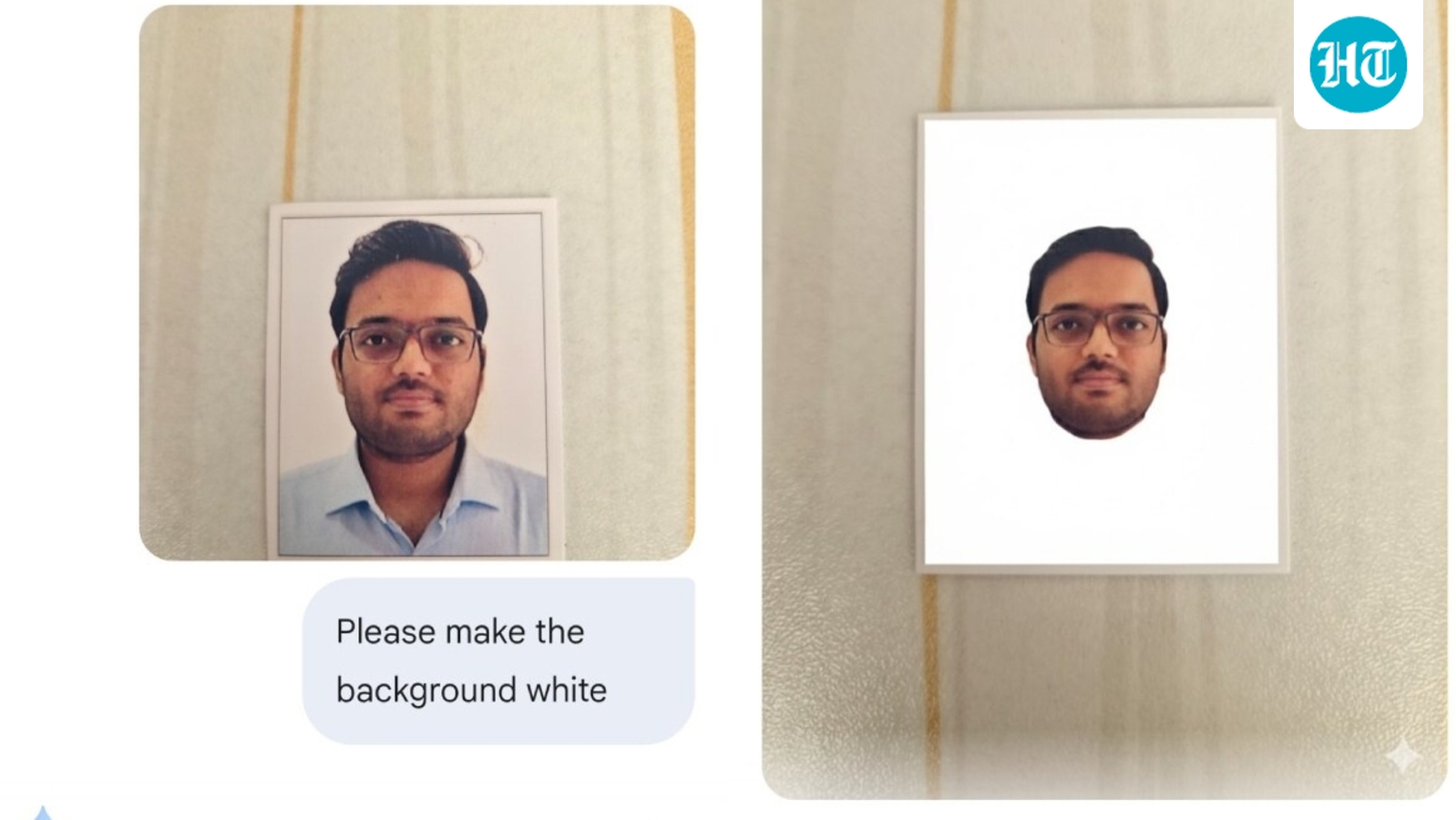

A Bengaluru techie asked Gemini AI to whiten a background, but the tool produced an oddly edited photo that sparked laughter across social media. (X/@jn_dubey)

A request for a white background turns into a floating head

Dubey shared the before and after screenshots on X, showing his original passport-style photo on the left and Gemini’s interpretation on the right. The AI produced a completely white background, but in the process trimmed away the rest of Dubey’s body, leaving only his head suspended in the middle. He posted the images with the caption, “Very good @GeminiApp,” which quickly set the tone for the playful reactions that followed.


Google Gemini responds to the viral moment

The official Google Gemini App account also stepped in to comment on the viral post. It wrote, “We understand your issue, Aditya. Please try rephrasing your prompt with more specific instructions, this will help Gemini respond more accurately. If the issue persists, please send feedback from your device by tapping top right corner initial> Feedback. Hope this helps.”

In response, Dubey replied, “I know da.. just kidding.”

Internet reacts with humour and relatable frustration

In the comments section, one user wrote, “Thankfully garland nahi dala,” while another remarked, “Damnnn It just saved your face.” A third user shared, “You probably just need to give it clearer context and more specific instructions,” as others kept the humour going. Another joked, “It gave you a haircut instead, awesome!” while two more users wrote, “Cannot stop laughing” and “This is so hilarious,” capturing the overall mood of the discussion.

(Disclaimer: This report is based on user-generated content from social media. HT.com has not independently verified the claims and does not endorse them.)